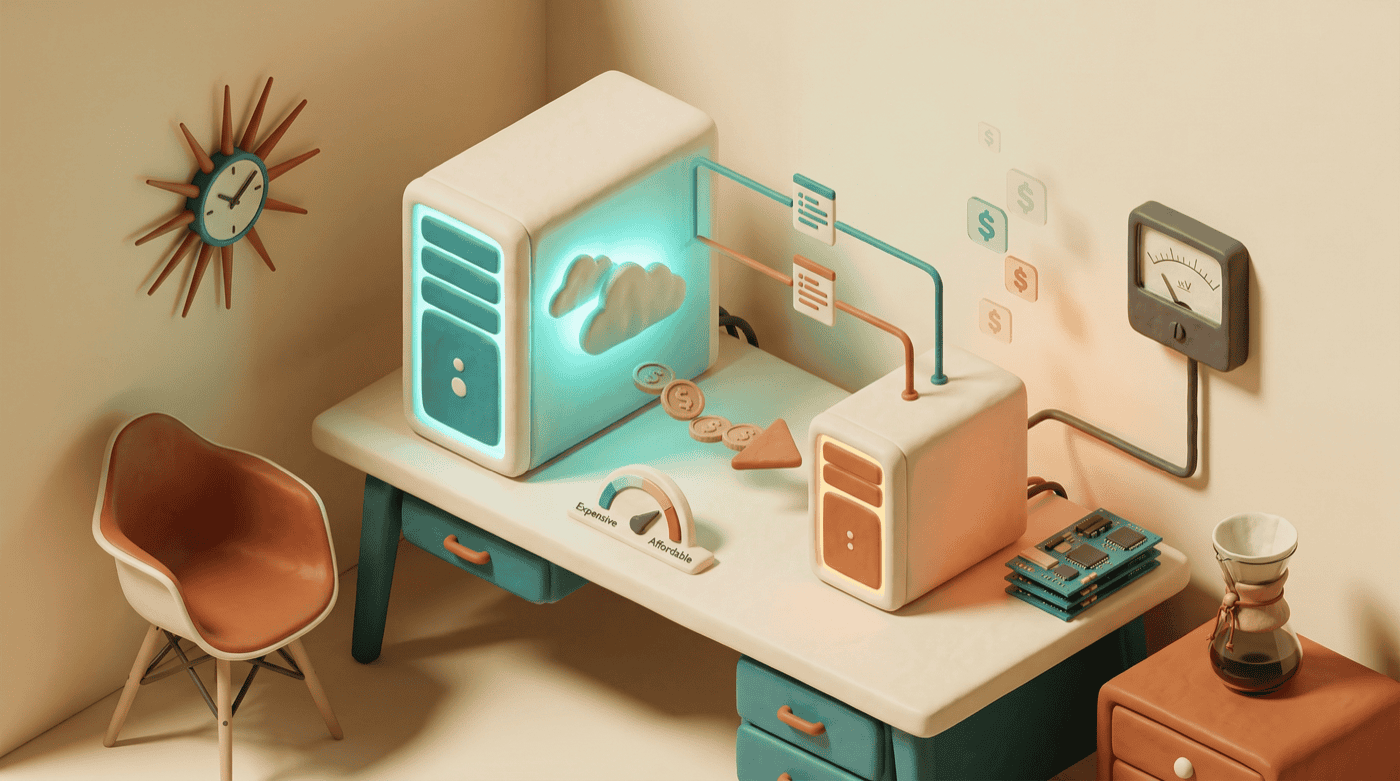

Setting Up Local LLMs to Reduce API Costs

API costs add up. Every classification, every summary, every boilerplate draft — each one burns tokens on a cloud model that’s overqualified for the job. It’s like hiring a senior architect to sort your mail.

The fix is straightforward: run a smaller model on your own machine for the mechanical stuff, and save the cloud API budget for work that actually requires reasoning. A 7-billion parameter model running locally handles summarization, classification, data extraction, and first drafts surprisingly well — and costs nothing per token after you’ve downloaded it.

By the end of this guide, you’ll have a local model running on your machine, know which tasks to route to it, and have a clear mental model for when local is the right call versus when you need the cloud.

What you’ll need

- A machine with at least 8GB of RAM; 16GB is better if you want to run larger models. Apple Silicon Macs are particularly good at this because their unified memory lets the GPU use most of the system’s RAM rather than a separate, smaller pool of video memory.

- Basic terminal comfort — you’ll be running a few commands, nothing complex.

- Ollama — the tool that makes all of this painless. If you don’t have it yet, we’ll install it in the first step.

Install Ollama

Ollama wraps the complexity of running local models into a few simple commands. No Python environments, no dependency management, no CUDA configuration.

macOS (Homebrew):

```shell
brew install ollama
```

Linux:

```shell
curl -fsSL https://ollama.com/install.sh | sh
```

Windows: Download the installer from ollama.com.

Once installed, start the Ollama service:

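```shell
ollama serve
```

This runs the server in the foreground. If you installed the macOS app from ollama.com, the server already runs in the background after installation. With Homebrew, brew services start ollama keeps it running in the background instead. On Linux, the install script typically registers a systemd service for you (check with systemctl status ollama). If it didn’t, you may want to set one up if you plan to use it regularly.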

You can verify it’s running by hitting the API endpoint:

```shell
curl http://localhost:11434/api/tags
```

You should see a JSON response, probably an empty model list, since you haven’t pulled anything yet.
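If you script against Ollama, wait until the server is actually answering before you send requests, for example right after starting ollama serve in the background. Here is a minimal sketch, assuming curl is installed. wait_for_ollama is a hypothetical helper name, and it simply polls the same /api/tags endpoint once per second:

```shell
# Hypothetical helper: poll the Ollama API until it answers or we give up.
# Usage: wait_for_ollama [base_url] [max_attempts]
wait_for_ollama() {
  local url="${1:-http://localhost:11434}" attempts="${2:-10}" i=1
  while [ "$i" -le "$attempts" ]; do
    # -s hides the progress meter; -f makes curl fail on HTTP error codes.
    if curl -sf "$url/api/tags" >/dev/null; then
      return 0
    fi
    # Wait a second between attempts, but not after the last one.
    if [ "$i" -lt "$attempts" ]; then
      sleep 1
    fi
    i=$((i + 1))
  done
  echo "Ollama did not respond at $url after $attempts attempts" >&2
  return 1
}

# Usage:
# ollama serve &
# wait_for_ollama && echo "Ollama is up"
```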

Pull your first model

Ollama uses a Docker-like pull model. You download a model once, and it’s available locally from then on.

For a solid starting point:

```shell
ollama pull qwen2.5-coder:7b
```

This downloads Qwen 2.5 Coder at the 7B parameter size, roughly a 4.5GB download. It’s a capable coding model that runs comfortably on 8GB of RAM and is fast on Apple Silicon.

Once the download finishes, test it:

```shell
ollama run qwen2.5-coder:7b
```

This opens an interactive chat. Type a question, get a response. Type /bye to exit. The first response takes a moment while the model loads into memory, but subsequent responses are fast.

Picking the right model for the job

Not all models are interchangeable, and size matters more than you’d think. Here’s what I’d recommend depending on your hardware and use case:

| Model | Download size | RAM needed | Good for |
|---|---|---|---|
| llama3.2:3b | ~2GB | 8GB | Classification, quick categorization, yes/no decisions |
| qwen2.5-coder:7b | ~4.5GB | 8GB | Code generation, summarization, general-purpose tasks |
| deepseek-coder-v2:16b | ~9GB | 16GB | Higher-quality code output, complex extraction |

Start with qwen2.5-coder:7b. It hits the sweet spot between quality and speed for most mechanical tasks. If you find yourself wanting better output and have the RAM, pull deepseek-coder-v2:16b. If you just need fast classification and don’t care about prose quality, llama3.2:3b loads almost instantly.

Pull any of them the same way:

```shell
ollama pull llama3.2:3b
ollama pull deepseek-coder-v2:16b
```

You can have multiple models installed simultaneously and switch between them.
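To check from a script which models are installed, you can read the /api/tags endpoint from the install step. The ollama list command shows the same information as a table. Here is a minimal sketch, assuming jq is installed (the later examples use it too). model_names and has_model are hypothetical helper names:

```shell
# Print each installed model's name, one per line.
# Reads the /api/tags JSON on stdin, so it can be tested without a running server.
model_names() {
  jq -r '.models[]?.name'
}

# Exit with status 0 if the exact model tag is installed locally.
has_model() {
  curl -s http://localhost:11434/api/tags | model_names | grep -Fxq "$1"
}

# Usage: pull a model only if it isn't already installed.
# has_model qwen2.5-coder:7b || ollama pull qwen2.5-coder:7b
```

Names include the tag, so a model pulled as llama3.2 shows up as llama3.2:latest, and has_model needs that full name.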

Know what goes where

Here’s where most people go wrong with local models: they try to use them for everything, get disappointed by the quality, and go back to cloud-only. The trick is knowing which tasks to route where.

Route locally — mechanical tasks with clear inputs and outputs:

- Summarizing content (meeting notes, articles, research)

- Classifying or categorizing items (emails, support tickets, data)

- Writing initial drafts of boilerplate (docstrings, README sections, form emails)

- Extracting structured data from unstructured text

- Text transformation (formatting changes, style conversion)

- Simple code generation with well-defined specs

Keep on the cloud — tasks that need reasoning or judgment:

- Complex multi-step reasoning

- Anything security-sensitive (the cloud model has guardrails you probably want)

- Tasks that require calling other tools in a chain

- Voice and style judgment (your brand voice, editorial decisions)

- Novel problem-solving where you need the model’s full capability

- Architecture decisions and code review

The mental model is simple: if you could write a clear, specific prompt and the output is either right or wrong — with no subjective quality gradient — it’s a local task. If you need the model to think, keep it on the cloud.
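That rule is easy to encode once you label your tasks. Here is a minimal sketch of the decision. route_task and its task labels are hypothetical. Anything it doesn’t recognize goes to the cloud, on the assumption that a wrong local answer costs more than a cloud call:

```shell
# Hypothetical router: print "local" or "cloud" for a task label.
route_task() {
  case "$1" in
    # Mechanical tasks with clear inputs and outputs run locally.
    summarize|classify|extract|draft|transform|simple-codegen)
      echo local ;;
    # Reasoning, judgment, tool chains, and anything unrecognized go to the cloud.
    *)
      echo cloud ;;
  esac
}

# Usage:
# route_task classify             # prints: local
# route_task architecture-review  # prints: cloud
```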

Put it to work

Alongside its native API under /api, Ollama exposes an OpenAI-compatible API at http://localhost:11434/v1. This means you can use it as a drop-in replacement in most tools that support custom endpoints.

Here’s a basic example using curl:

```shell
curl http://localhost:11434/v1/chat/completions \
  -H "Content-Type: application/json" \
  -d '{
    "model": "qwen2.5-coder:7b",
    "messages": [
      {
        "role": "user",
        "content": "Classify this email as: action-needed, fyi, or spam.\n\nSubject: Q3 budget review meeting\nBody: Hi team, please review the attached budget before Friday."
      }
    ]
  }'
```

The response format matches OpenAI’s API, so existing code that talks to GPT models works with minimal changes: swap the base URL and model name.

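For a more practical pattern, here’s a simple bash function you can drop into your scripts. It builds the request body with jq instead of pasting the prompt into a JSON string by hand. That way, prompts containing quotes or newlines (like most meeting notes) don’t break the request:

```shell
# Send a prompt to the local model and print only the reply text.
# Requires curl and jq.
local_llm() {
  # jq -n --arg escapes the prompt safely into the JSON request body.
  jq -n --arg model "qwen2.5-coder:7b" --arg prompt "$1" \
    '{model: $model, messages: [{role: "user", content: $prompt}]}' |
    curl -s http://localhost:11434/v1/chat/completions \
      -H "Content-Type: application/json" \
      -d @- |
    jq -r '.choices[0].message.content'
}

# Usage:
local_llm "Summarize this in one sentence: $(cat meeting-notes.txt)"
```

The draft-first pattern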

One workflow that’s worked well in my testing: let the local model write the first draft of something, then hand it to a cloud model for refinement. The local model does the grunt work of getting words on the page, and the cloud model applies judgment and polish.

This works well for documentation, commit messages, code comments, and any writing task where starting from a blank page is the hard part. The local draft gives the cloud model something to react to, which is faster and cheaper than asking the cloud model to generate from scratch.
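Here is a minimal sketch of the two-step pipeline. draft_then_refine is a hypothetical helper that takes the drafting and refining commands as arguments. Any command that accepts a prompt as its first argument and prints a reply will do. For example, use the local_llm function above for the draft and a wrapper around your cloud provider’s API for the refinement. That wrapper is not shown, and cloud_llm below is only a placeholder name:

```shell
# Hypothetical helper: draft with one command, refine with another.
# Usage: draft_then_refine TASK DRAFTER REFINER
draft_then_refine() {
  local task="$1" drafter="$2" refiner="$3" draft
  # Step 1: the local model produces a cheap first draft.
  draft="$("$drafter" "Write a first draft. Task: $task")" || return 1
  # Step 2: the cloud model reacts to that draft instead of starting from a blank page.
  "$refiner" "Improve this draft for clarity and tone without changing its facts.
Task: $task

Draft:
$draft"
}

# Usage (cloud_llm is a placeholder for your own cloud API wrapper):
# draft_then_refine "Write a commit message for: $(git diff --staged)" local_llm cloud_llm
```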

What you’ll actually see

After running this setup for a while, a few things become clear.

Speed is the surprise. A 7B model on Apple Silicon responds in under a second for short prompts. There’s no network latency, no queue, no rate limiting. For tasks like classification where you’re processing a batch of items, the speed difference is significant.
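For the batch case, here is a minimal sketch that classifies one item per input line. classify_batch is a hypothetical helper that takes the classifier command as an argument, for example the local_llm function above. That makes it easy to swap models or test it with a stub. The label set is the one from the email example earlier:

```shell
# Hypothetical helper: classify each input line and print "item<TAB>label".
# Usage: classify_batch CLASSIFIER < items.txt
classify_batch() {
  local classifier="$1" item label
  # The || test also handles a last line without a trailing newline.
  while IFS= read -r item || [ -n "$item" ]; do
    # Skip blank lines.
    if [ -z "$item" ]; then
      continue
    fi
    # </dev/null stops the classifier from swallowing the rest of the input.
    label="$("$classifier" "Classify this item as action-needed, fyi, or spam. Reply with the label only: $item" </dev/null)"
    printf '%s\t%s\n' "$item" "$label"
  done
}

# Usage:
# classify_batch local_llm < subjects.txt > labeled.tsv
```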

Quality has a ceiling. Local models handle well-defined tasks competently, but they struggle with anything ambiguous or requiring broad knowledge. I once routed a newsletter draft to a 7B model thinking it would save time on a first pass. What came back was technically a draft, but it had all the personality of a terms-of-service page: every sentence the same length, every paragraph structured identically. The cloud model would have matched my voice on the first try.

The same gap shows up in more mechanical work. A classification prompt that works well on GPT-4 may need more explicit examples to get the same accuracy from a 7B model. Expect to spend a bit more time on prompt engineering for local models; being specific pays off more when the model is smaller.

Memory matters. While a model is loaded, it occupies RAM. Running qwen2.5-coder:7b while also running your IDE, a browser with 40 tabs, and Docker containers is going to feel tight on a 16GB machine. Ollama unloads models after a period of inactivity (5 minutes by default), so this is mostly an issue during active use.
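If you need the memory back sooner, check what’s loaded with ollama ps and unload a model explicitly. According to Ollama’s API documentation, a request with no prompt and keep_alive set to 0 unloads the model immediately. Here is a minimal sketch, assuming jq and curl are installed. unload_payload and unload_model are hypothetical helper names:

```shell
# Build the request body that asks Ollama to unload a model right away.
unload_payload() {
  jq -n --arg model "$1" '{model: $model, keep_alive: 0}'
}

# Send it to the native generate endpoint; with no prompt, nothing is generated.
unload_model() {
  unload_payload "$1" |
    curl -s http://localhost:11434/api/generate -H "Content-Type: application/json" -d @- >/dev/null
}

# Usage:
# ollama ps                        # list models currently loaded in memory
# unload_model qwen2.5-coder:7b
```

To change the idle timeout for every model instead, set the OLLAMA_KEEP_ALIVE environment variable for the server (for example, OLLAMA_KEEP_ALIVE=30m).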

The cost savings are real but indirect. You won’t see a dramatic drop in your API bill overnight, because the expensive calls are usually the complex reasoning tasks you’re still sending to the cloud. What changes is that you stop hesitating to automate small tasks, since the per-call cost is zero. Remember the classification script you never wrote because processing 500 items at $0.02 each would cost $10 a run? Now it’s free. The cumulative effect of removing that cost friction adds up over weeks.

Limitations worth knowing

Local models are smaller by a wide margin. The largest models most people can run locally are around 70B parameters, and even those need a high-memory machine. Most frontier cloud models don’t disclose their size, but they are widely believed to be much larger. For the mechanical tasks we’re routing here, that gap doesn’t matter much. It’s worth keeping in mind, though, if you’re tempted to push the boundary of what “mechanical” means.

Tool calling is limited. Ollama’s API accepts tool definitions for models that support them, but small local models are much less reliable at deciding which tools to call and chaining those calls over several steps. If your workflow depends on the model orchestrating tools in sequence, that’s still cloud territory.

Ollama is built for one person on one machine. By default it listens only on localhost and has no built-in authentication. If you’re thinking about sharing a local model across a team, you’ll want to look at something more production-oriented. For personal workflow automation, Ollama is the right tool.

Where to go from here

- Integrate with Claude Code — if you use Claude Code, you can connect your local Ollama instance via an MCP server, letting Claude route mechanical subtasks to the local model automatically during sessions.

- Build a routing layer — once you’re comfortable with which tasks go where, consider writing a simple script or function that decides automatically based on task type.

- Experiment with model sizes — pull a few different models and run the same prompts against them. The quality-speed tradeoff varies by task, and ten minutes of testing saves hours of working with the wrong model.

- Watch your API dashboard — after a month of routing mechanical tasks locally, check your cloud API usage. The pattern of what remains is informative — those are the tasks that genuinely need the bigger model.
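For the model-size experiment, here is a minimal sketch that runs one prompt against several installed models and reports rough timings. compare_models is a hypothetical helper. It calls ollama run with the prompt as an argument, which prints a single answer and exits instead of opening the interactive chat. The RUNNER variable exists only so you can swap in another command:

```shell
# Hypothetical helper: run the same prompt against each model and time it.
# Usage: compare_models PROMPT MODEL...
compare_models() {
  local prompt="$1" runner model start end answer
  shift
  # Left unquoted below on purpose so "ollama run" splits into command + subcommand.
  runner="${RUNNER:-ollama run}"
  for model in "$@"; do
    start=$(date +%s)
    # </dev/null gives the runner empty input so it never waits on the terminal.
    answer="$($runner "$model" "$prompt" </dev/null)"
    end=$(date +%s)
    printf '=== %s (%ss)\n%s\n\n' "$model" "$((end - start))" "$answer"
  done
}

# Usage:
# compare_models "Classify as action-needed, fyi, or spam: Q3 budget review meeting" \
#   llama3.2:3b qwen2.5-coder:7b deepseek-coder-v2:16b
```

Timings are in whole seconds. The first call to each model also includes the time it takes to load into memory.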