Automating Your Publishing Pipeline with AI

Publishing content involves a dozen steps between “I have an idea” and “it’s live.” Writing, formatting, generating images, validating metadata, building the site, deploying, emailing subscribers, posting to social. Most people do each step by hand, every time.

That’s fine when you publish once a month. When you’re shipping weekly — a newsletter, a blog post, both — the manual steps start eating hours you’d rather spend on the actual writing. Worse, they introduce errors: a missing hero image path, a broken frontmatter field, a deploy that fails because you forgot to run the build first.

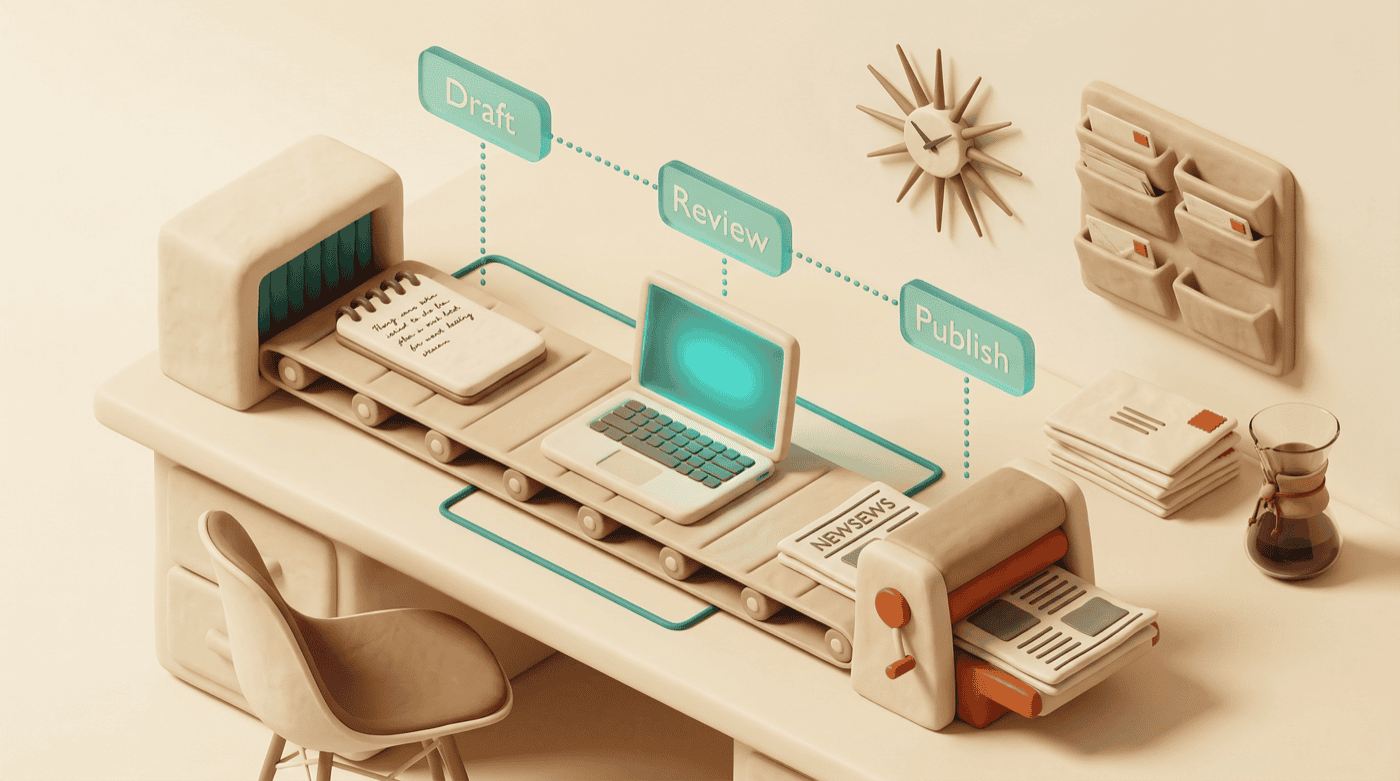

The fix is a pipeline — a series of discrete, repeatable stages that you can run with a command instead of a checklist. By the end of this guide, you’ll have the structure for an end-to-end publishing pipeline, from rough draft to live site and sent newsletter. You won’t build every stage today, and that’s the point. You’ll start with what hurts most and add stages as you need them.

What you’ll need

- Claude Code — installed and working. See Anthropic’s installation guide if you’re not set up yet.

- A static site generator — this guide uses Astro for examples, but the pipeline pattern works with Hugo, Next.js, Eleventy, or anything that builds from markdown.

- A hosting target — somewhere to deploy. Could be a VPS with rsync, Netlify, Vercel, Cloudflare Pages, or even GitHub Pages.

- An email platform (optional) — Kit, Mailchimp, Buttondown, or similar, if your pipeline includes a newsletter.

- Basic comfort with the command line — you’ll be running build commands, not clicking through GUIs.

The pipeline, stage by stage

Think of this as six stations on an assembly line. Each one takes a specific input, does a specific job, and hands off to the next. You don’t need to build the whole factory on day one — start with two stages and bolt on the rest over weeks or months.

Stage 1: Drafting — the rough-then-refined pattern

The fastest way to get a first draft: let a local model do the rough work, then bring in a more capable model for refinement.

Here’s how that looks in practice:

    # Local model generates a rough draft from your outline and saves it
    cat outline.md | ollama run qwen2.5-coder:7b \
      "Write a first draft blog post from this outline. Keep it conversational." \
      > draft.md

    # Refine the saved draft with Claude Code
    claude "Revise draft.md to match the voice profile in VOICE.md.
    Tighten the opening, cut filler, and make the examples specific."

The local model doesn’t need to be good at voice or style — it just needs to turn bullet points into paragraphs. That’s mechanical work, and a 7B model handles it fine. The cloud model does the editorial pass: voice consistency, structural tightening, the judgment calls that actually matter. Grunt work local, taste in the cloud.

If you’re using Claude Code with a CLAUDE.md file that includes your voice profile and project conventions, the refinement step gets better every time. The model already knows your preferences — it just needs the raw material to work with.

What you can automate here: A Claude Code custom command (slash command) that takes an outline file as input and runs the two-step draft process. One command, both stages.
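As a rough sketch of what that one command could wrap, here is the two-step process as a bash function. The function name `draft_post`, the default `draft.md` output, and the empty-draft check are assumptions for illustration. The model name and prompts are the ones from the example above. A real slash command would live in your Claude Code configuration rather than in a shell file.

```shell
# Sketch of a two-step drafting wrapper. Model name and prompts follow the
# example above; the function name and the empty-draft check are assumptions.

draft_post() {
  local outline="$1"
  local draft="${2:-draft.md}"

  if [ ! -s "$outline" ]; then
    echo "draft_post: outline '$outline' is missing or empty" >&2
    return 1
  fi

  # Step 1: the local model turns the outline into rough paragraphs.
  ollama run qwen2.5-coder:7b \
    "Write a first draft blog post from this outline. Keep it conversational." \
    < "$outline" > "$draft" || return 1

  # Stop here if the local model produced nothing, so the refinement
  # step never runs on an empty file.
  if [ ! -s "$draft" ]; then
    echo "draft_post: local model returned an empty draft" >&2
    return 1
  fi

  # Step 2: hand the saved draft to Claude Code for the editorial pass.
  claude "Revise $draft to match the voice profile in VOICE.md. Tighten the opening, cut filler, and make the examples specific."
}
```

After sourcing the file, `draft_post outline.md` would run both steps and leave the rough draft in `draft.md` for the refinement pass.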

Stage 2: Review — catching what you can’t see yourself

You just wrote the thing. You’re the worst person to review it. Your brain fills in missing words, smooths over awkward phrasing, and absolutely will not notice that you used “robust” three times in four paragraphs.

Automated review catches two categories of problems:

Voice drift and AI patterns. If you used AI assistance during drafting (and you did — that’s the point of Stage 1), the output will carry fingerprints. Phrases like “it’s worth noting” or “moreover” or the dreaded “let’s dive in.” A slop detection pass scans the draft against a list of known AI-generated patterns and flags them for removal.

Structural issues. Missing sections, frontmatter fields that don’t match what your site expects, broken links, images referenced but not present. These are the things you’d catch on the third proof-read, except you’re not going to do a third proof-read because it’s Wednesday night and you want to publish.

In Claude Code, this can be a custom skill that reads the draft, runs it against your voice profile and a slop pattern list, validates the frontmatter schema, and checks that referenced assets exist on disk. The skill outputs a list of specific edits — and if you’ve set it up right, makes the edits directly rather than just recommending them.

    # Example: a pre-publish review command
    /review-draft src/content/blog/my-new-post.md

The review should produce an edited file plus a short report of what changed and why. If it’s only producing recommendations without applying them, you’ve built a critic, not an editor. Build editors.
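The slop detection pass is the easiest part of this stage to prototype outside of a skill. The sketch below assumes a plain text file with one flagged phrase per line. The function name `scan_slop` and that file format are made up for illustration. It only flags lines, so it is the critic half of the job, and the editing still belongs to the skill.

```shell
# Sketch of a slop-pattern scan. The function name and the patterns-file
# format (one phrase per line) are assumptions for illustration.

scan_slop() {
  local draft="$1"
  local patterns="$2"
  local hits

  # Drop blank lines first: an empty pattern would match every line.
  # -F treats phrases as literal text, -i ignores case, -n adds line numbers.
  hits=$(grep -v '^[[:space:]]*$' "$patterns" |
    grep -n -i -F -f /dev/stdin "$draft") || true

  if [ -n "$hits" ]; then
    echo "Flagged phrases in $draft:"
    echo "$hits"
    return 1
  fi
  echo "No flagged phrases in $draft."
}
```

Because the function returns a non-zero status when it finds anything, it can act as a gate in a larger script, for example `scan_slop my-new-post.md slop-patterns.txt || exit 1`.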

Stage 3: Asset generation — images that don’t break the build

Every blog post needs a hero image. Maybe an OG image for social sharing. If you have a consistent visual style — claymorphic, sketch, photo-realistic, whatever — generating these from article content is straightforward with image generation APIs or tools like DALL-E, Midjourney, or local models.

Here’s the part that trips people up: compression and placement.

A raw AI-generated PNG can easily be 5-8MB. Your site doesn’t want that. Run it through compression before it lands in your assets directory:

    # Compress to web-friendly size
    pngquant --quality=65-80 --output hero.png input.png

And the file needs to land in the right place with the right name, because your frontmatter is pointing at a specific path. If the frontmatter points to https://assets.jimchristian.net/son/blog/2026/my-post/hero.png but the file is sitting on your desktop as generated-image-v3-final-FINAL.png, the build will succeed but the page will show a broken image.

What you can automate here: A command that generates the hero image from the article title and description, compresses it, and moves it to the path specified in the frontmatter. No manual file shuffling, no forgotten compression step.
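Leaving the image generation call aside (it depends on which tool you use), the compress-and-place half might look something like this. The function name `place_hero` and the destination path in the usage line are hypothetical. The quality range is the same one used in the pngquant command above.

```shell
# Sketch of a compress-and-place step. The function name is an assumption;
# the pngquant quality range matches the command above.

place_hero() {
  local input="$1"   # raw generated image
  local dest="$2"    # local path your frontmatter points at

  if [ ! -f "$input" ]; then
    echo "place_hero: '$input' not found" >&2
    return 1
  fi

  # Create the target directory so the compressed file has somewhere to land.
  mkdir -p "$(dirname "$dest")" || return 1

  # --force lets a rerun overwrite an earlier hero image at the same path.
  pngquant --quality=65-80 --force --output "$dest" "$input" || return 1

  # Report sizes so an uncompressed multi-megabyte file is easy to spot.
  local before after
  before=$(wc -c < "$input")
  after=$(wc -c < "$dest")
  echo "hero image: $((before)) bytes -> $((after)) bytes at $dest"
}
```

A call such as `place_hero ~/Desktop/generated-image-v3-final-FINAL.png public/blog/my-post/hero.png` would write the compressed copy to the target path and leave the original where it was.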

Stage 4: Frontmatter and SEO pre-flight

Static site generators are unforgiving about metadata. A missing pubDate in your frontmatter silently breaks your RSS feed. A heroImage path pointing to a file that doesn’t exist gives you a blank space where your image should be. Tags with inconsistent casing create duplicate tag pages.

A pre-flight check validates all of this before you build:

    # Pseudo-code for a frontmatter validator
    # Check: title exists and isn't empty
    # Check: pubDate is valid ISO date
    # Check: description exists, is 50-160 characters (SEO sweet spot)
    # Check: heroImage path resolves to an actual file
    # Check: tags are lowercase, no duplicates
    # Check: all required fields present for your content schema

This is the kind of task Claude Code handles well because it’s rule-based — read the frontmatter, compare against a schema, report violations. You can encode your content schema as a set of rules in a skill, and it checks every post before build.
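To make the pseudo-code concrete, here is one way the first five checks could look in bash. Treat it as a sketch under some loud assumptions: frontmatter is flat `key: value` lines between `---` markers, tags are an inline list like `[ai, writing]`, and local images live under a `public` directory. The function names are invented for this example, and this is not a YAML parser.

```shell
# Sketch of the checks above in bash. Field names follow the list; the
# function names, the "public" asset root, and the flat "key: value"
# frontmatter layout are assumptions. This is not a YAML parser.

# Print the lines between the opening and closing "---" markers.
frontmatter() {
  awk 'NR==1 && $0 !~ /^---[ \t]*$/ { exit }
       /^---[ \t]*$/ { n++; if (n == 2) exit; next }
       n == 1 { print }' "$1"
}

# Print the value of one top-level field, without surrounding quotes.
field() {
  frontmatter "$1" |
    sed -n "s/^$2:[[:space:]]*//p" |
    head -n 1 |
    sed -e 's/[[:space:]]*$//' -e 's/^"\(.*\)"$/\1/' -e "s/^'\(.*\)'\$/\1/"
}

validate_post() {
  local post="$1" root="${2:-public}" errors=0
  local title pub desc hero tags dupes

  title=$(field "$post" title)
  pub=$(field "$post" pubDate)
  desc=$(field "$post" description)
  hero=$(field "$post" heroImage)
  tags=$(field "$post" tags)

  [ -n "$title" ] || { echo "ERROR: title is missing or empty"; errors=$((errors + 1)); }

  # Shape check only (YYYY-MM-DD prefix); it does not reject 2026-02-31.
  if ! printf '%s' "$pub" | grep -Eq '^[0-9]{4}-[0-9]{2}-[0-9]{2}'; then
    echo "ERROR: pubDate '$pub' is not an ISO date"; errors=$((errors + 1))
  fi

  if [ "${#desc}" -lt 50 ] || [ "${#desc}" -gt 160 ]; then
    echo "ERROR: description is ${#desc} characters, expected 50-160"; errors=$((errors + 1))
  fi

  # Remote URLs are skipped; local paths must exist under the asset root.
  case "$hero" in
    "") echo "ERROR: heroImage is missing"; errors=$((errors + 1)) ;;
    http://*|https://*) ;;
    *) [ -f "$root/${hero#/}" ] || { echo "ERROR: heroImage '$hero' not found under $root"; errors=$((errors + 1)); } ;;
  esac

  if printf '%s' "$tags" | grep -q '[[:upper:]]'; then
    echo "ERROR: tags must be lowercase: $tags"; errors=$((errors + 1))
  fi

  # Split an inline list like [ai, writing] and look for repeated entries.
  dupes=$(printf '%s\n' "$tags" | tr -d "[]\"' " | tr ',' '\n' | sort | uniq -d)
  [ -z "$dupes" ] || { echo "ERROR: duplicate tags: $dupes"; errors=$((errors + 1)); }

  [ "$errors" -eq 0 ]
}
```

`validate_post src/content/blog/my-new-post.md` prints one line per violation and returns non-zero if it found any, so it can gate the build the same way a Node-based validator would.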

The check takes seconds. Finding out your RSS feed has been broken for three weeks because of a date format issue? That takes longer to recover from. Ask me how I know.

Stage 5: Build and deploy

If you’ve gotten this far, the actual build and deploy is the easy part. It’s two commands:

    # Build
    npm run build

    # Deploy (rsync example — adjust for your host)
    rsync -avz --delete dist/ user@server:/var/www/mysite/

Or if you’re on Netlify, Vercel, or Cloudflare Pages, a git push triggers the build automatically.

The value of making this a pipeline stage rather than something you do by hand: it only runs after the previous stages pass. The build doesn’t fire until the frontmatter validates. The deploy doesn’t fire until the build succeeds. Each stage is a gate, and problems get caught before they reach production.

    #!/bin/bash
    # A simple pipeline script
    set -e # Stop on any error

    echo "Running pre-flight checks..."
    node scripts/validate-frontmatter.js "$1"

    echo "Building site..."
    npm run build

    echo "Deploying..."
    rsync -avz --delete dist/ user@server:/var/www/mysite/

    echo "Published."

The set -e flag is doing the heavy lifting here — any step that fails stops the entire pipeline. Your half-built site never makes it to production.
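One more gate you might consider for the rsync route: because `--delete` removes remote files that are missing locally, an empty or partial `dist/` can wipe the live site even when every earlier command exited cleanly. The sketch below is one possible guard. The function name `deploy_site`, the `DRY_RUN` variable, and the `index.html` check are assumptions, and the rsync target is the placeholder from the script above.

```shell
# Sketch of a guarded deploy step. The function name, the DRY_RUN variable,
# and the index.html check are assumptions; the rsync target is the
# placeholder from the script above.

deploy_site() {
  local dist="${1:-dist}"
  local target="${2:-user@server:/var/www/mysite/}"

  # --delete removes remote files that are missing locally, so refuse to
  # sync a build directory that has no index page.
  if [ ! -f "$dist/index.html" ]; then
    echo "deploy_site: $dist/index.html not found, refusing to deploy" >&2
    return 1
  fi

  # DRY_RUN=1 previews the transfer; rsync lists changes without applying them.
  if [ "${DRY_RUN:-0}" = "1" ]; then
    rsync -avz --delete --dry-run "$dist/" "$target"
  else
    rsync -avz --delete "$dist/" "$target"
  fi
}
```

Setting `DRY_RUN=1` before the first few deploys is a cheap way to see what rsync intends to delete before it deletes anything.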

Stage 6: Distribution — email and social

Publishing to your site is half the job. If you have a newsletter, subscribers need to get the email. If you’re active on social media, you want posts about the new content.

Email platforms like Kit and Mailchimp have APIs. You can create and send a broadcast programmatically:

    # Kit CLI example
    kit broadcasts create --subject "New post: My Article Title" \
      --content-file newsletter-version.md

    # Or via API
    curl -X POST https://api.kit.com/v4/broadcasts \
      -H "Authorization: Bearer $KIT_API_KEY" \
      -H "Content-Type: application/json" \
      -d '{"subject": "New post", "content": "..."}'

Social posting is similar — most platforms have APIs or tools like Buffer’s API that let you schedule posts from the command line.

Here’s an honest admission: this is the stage I’d automate last. The email version of an article often needs different formatting than the site version — shorter, more conversational, with a different intro. And social posts need to be crafted for each platform. Full automation here can produce content that feels robotic to your audience, which defeats the purpose. Semi-automation — generating a draft social post and email version that you review before sending — is the better trade-off for most people.
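Semi-automation can be as simple as producing a starting file that you then edit by hand. As a sketch, the function below strips the frontmatter, keeps the opening paragraphs, and adds a link back to the post. The name `make_email_draft`, the three-paragraph default, and the "Read the rest" line are all assumptions, and nothing here talks to an email platform.

```shell
# Sketch of a semi-automated email draft. The function name, the
# three-paragraph default, and the "Read the rest" line are assumptions.

make_email_draft() {
  local post="$1" url="$2" out="$3" paragraphs="${4:-3}"

  {
    # Drop the frontmatter block, then keep the first few paragraphs.
    # RS="" puts awk in paragraph mode: records are separated by blank lines.
    awk 'NR==1 && /^---[ \t]*$/ { fm = 1; next }
         fm && /^---[ \t]*$/   { fm = 0; next }
         !fm { print }' "$post" |
      awk -v max="$paragraphs" 'BEGIN { RS = ""; ORS = "\n\n" } NR <= max { print }'

    echo "Read the rest: $url"
  } > "$out"

  echo "Draft written to $out. Review and edit it before sending."
}
```

The output is deliberately a file on disk rather than a sent broadcast, so the rewrite for tone and the platform-specific tweaks stay in your hands.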

What you’ll see when it’s working

A working pipeline doesn’t look dramatic. It looks like this: you finish writing, run a command, and ten minutes later the article is live on your site with a compressed hero image, valid metadata, and a draft email sitting in your Kit dashboard ready for review.

The time savings compound. Each stage you automate removes a category of errors permanently — no more broken RSS feeds, no more 6MB hero images, no more stale pubDate fields copied from a template three posts ago.

But the bigger shift is psychological. When publishing is a single command instead of a 45-minute ritual, you publish more often. And that’s the whole game, right? The friction was never the writing — it was everything after the writing.

Build it incrementally

You do not need all six stages on day one. Start with the stage that causes you the most pain.

If you keep breaking frontmatter, start with Stage 4 — the pre-flight validator. If your drafting process is slow, start with Stage 1 — the two-model pattern. If you’re spending 20 minutes per post on image generation and file management, start with Stage 3.

Add stages as the pipeline proves its value. Each new stage is an afternoon’s work to set up (mostly encoding rules you already follow manually into something a machine can check), and it pays that time back within a few uses.

A few practical starting points:

- Create a Claude Code skill for your most painful stage. Write the rules as a SKILL.md file, test it on your last three posts, refine.

- Connect two stages with a shell script. Even a three-line bash script that runs validation then build is a pipeline. It doesn’t need to be sophisticated to be useful.

- Keep a “publishing friction” log for a week. Write down every manual step and every error. The log tells you exactly where automation will help most.
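If you would rather keep the friction log from the terminal, a pair of small functions is enough. This is only a sketch: the log file name, the function names, and the idea of tagging each entry with a stage label are assumptions.

```shell
# Sketch of a friction log. The file name, function names, and the
# stage label are assumptions for illustration.

FRICTION_LOG="${FRICTION_LOG:-publishing-friction.log}"

# Append one entry: friction <stage> <what went wrong or took time>
friction() {
  local stage="$1"
  shift
  printf '%s\t%s\t%s\n' "$(date +%Y-%m-%d)" "$stage" "$*" >> "$FRICTION_LOG"
}

# Count entries per stage, most frequent first.
friction_summary() {
  cut -f 2 "$FRICTION_LOG" | sort | uniq -c | sort -rn
}
```

Log entries as they happen, for example `friction frontmatter "pubDate copied from old template"`, then run `friction_summary` at the end of the week. The stage at the top of the list is a reasonable candidate for your first automation.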

The goal is fewer things to remember, fewer things to break, and more time spent on the part that actually matters — the writing.